NewsGenie: AI-Powered News Chatbot

Complete Technical Report

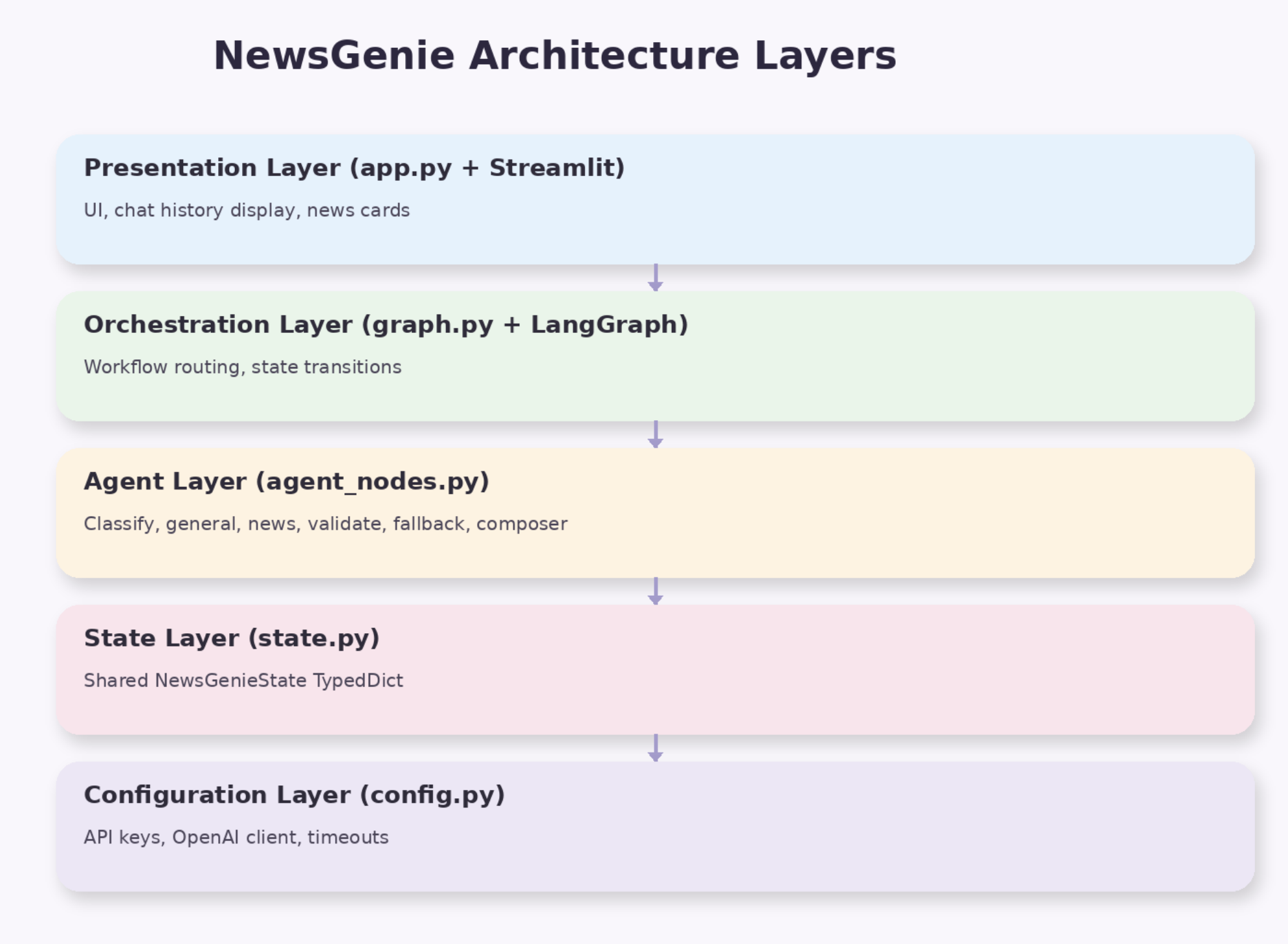

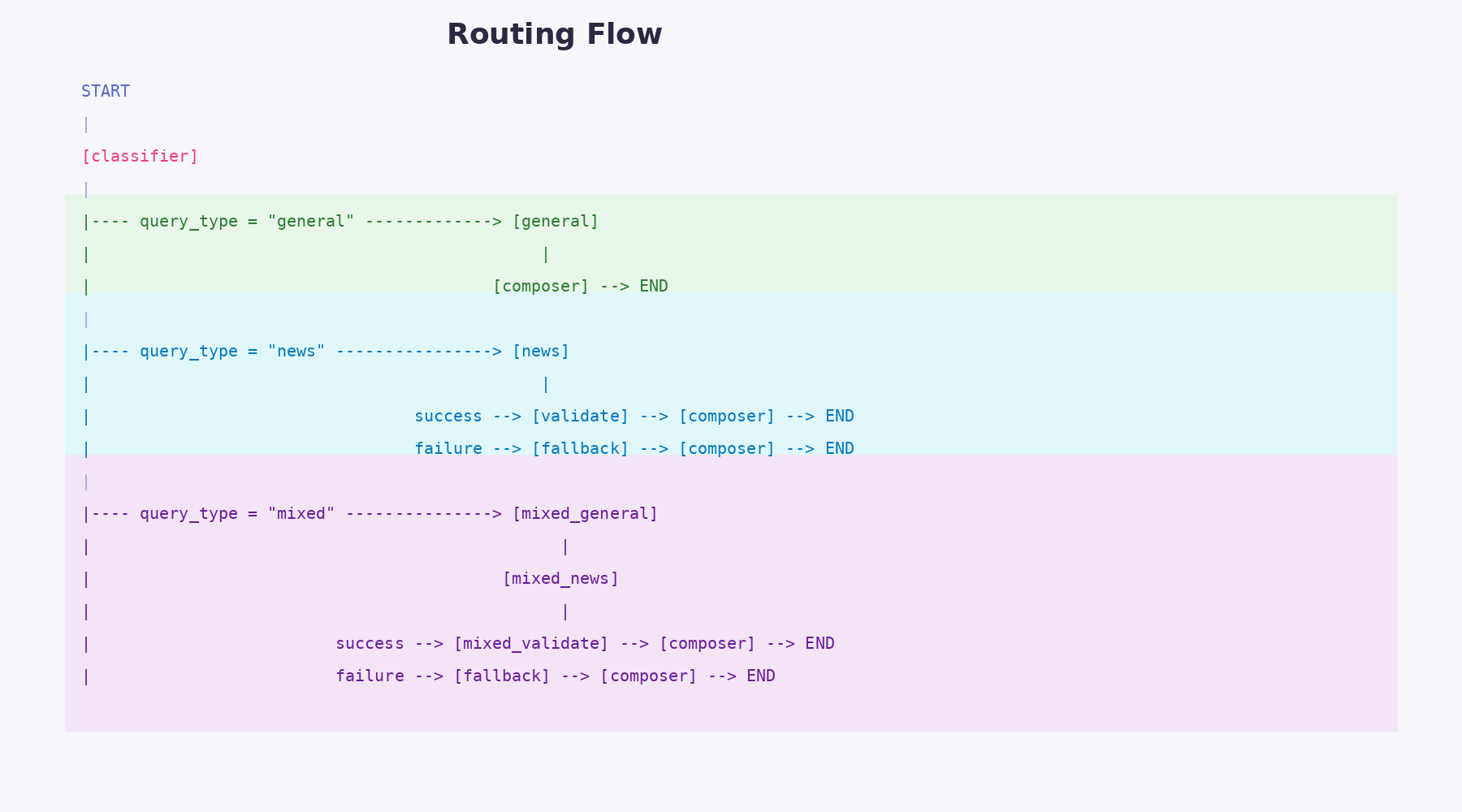

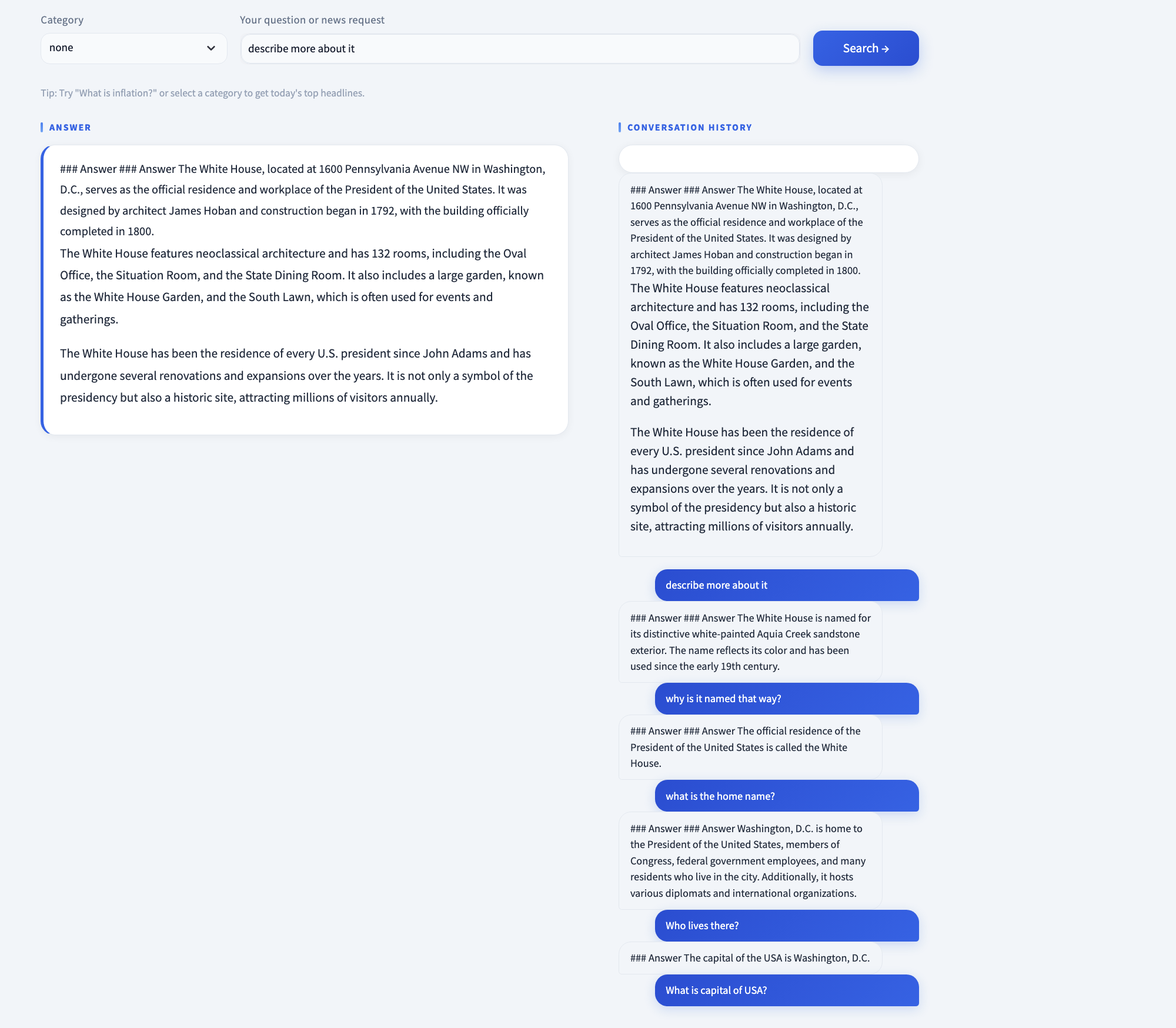

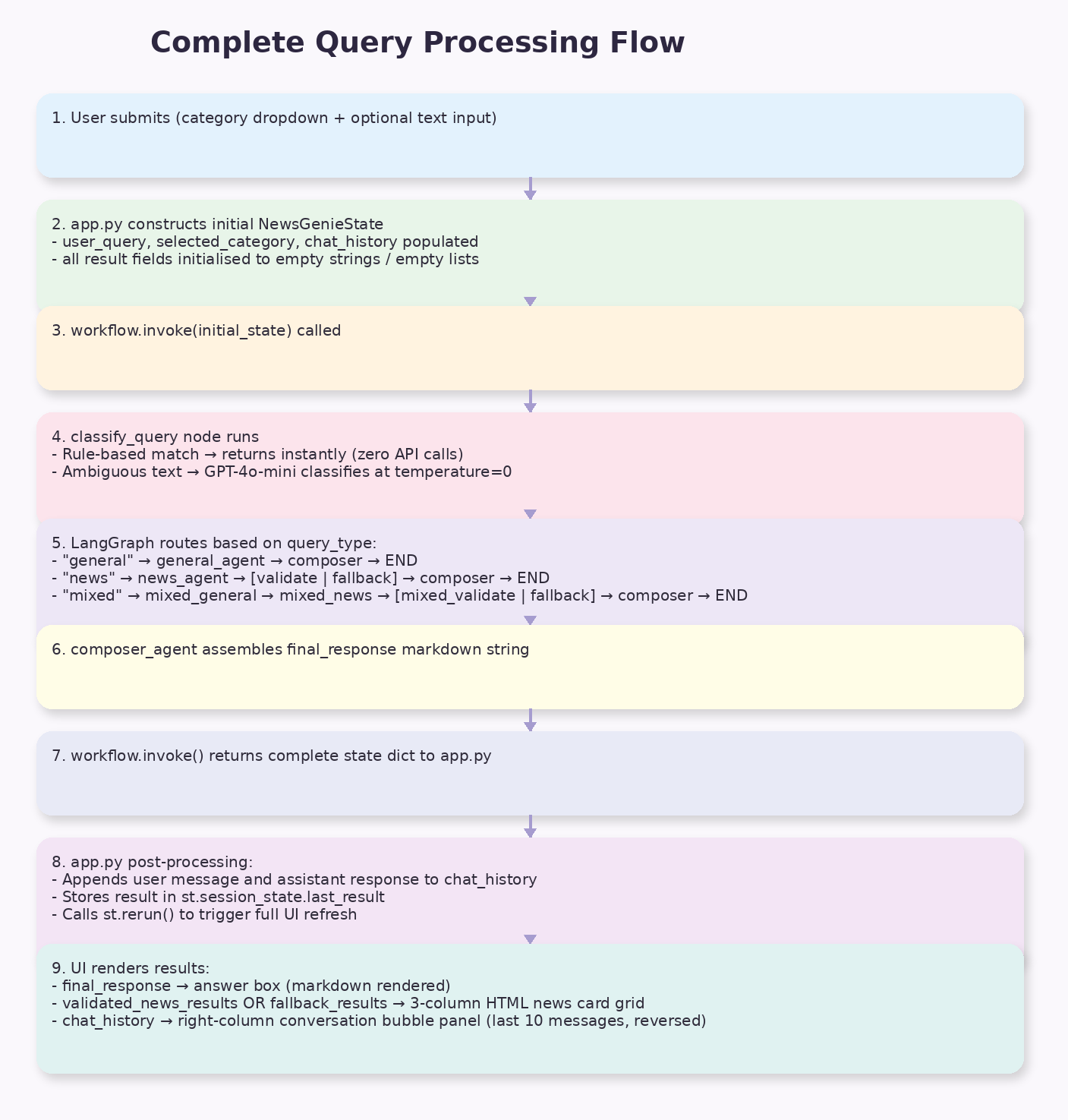

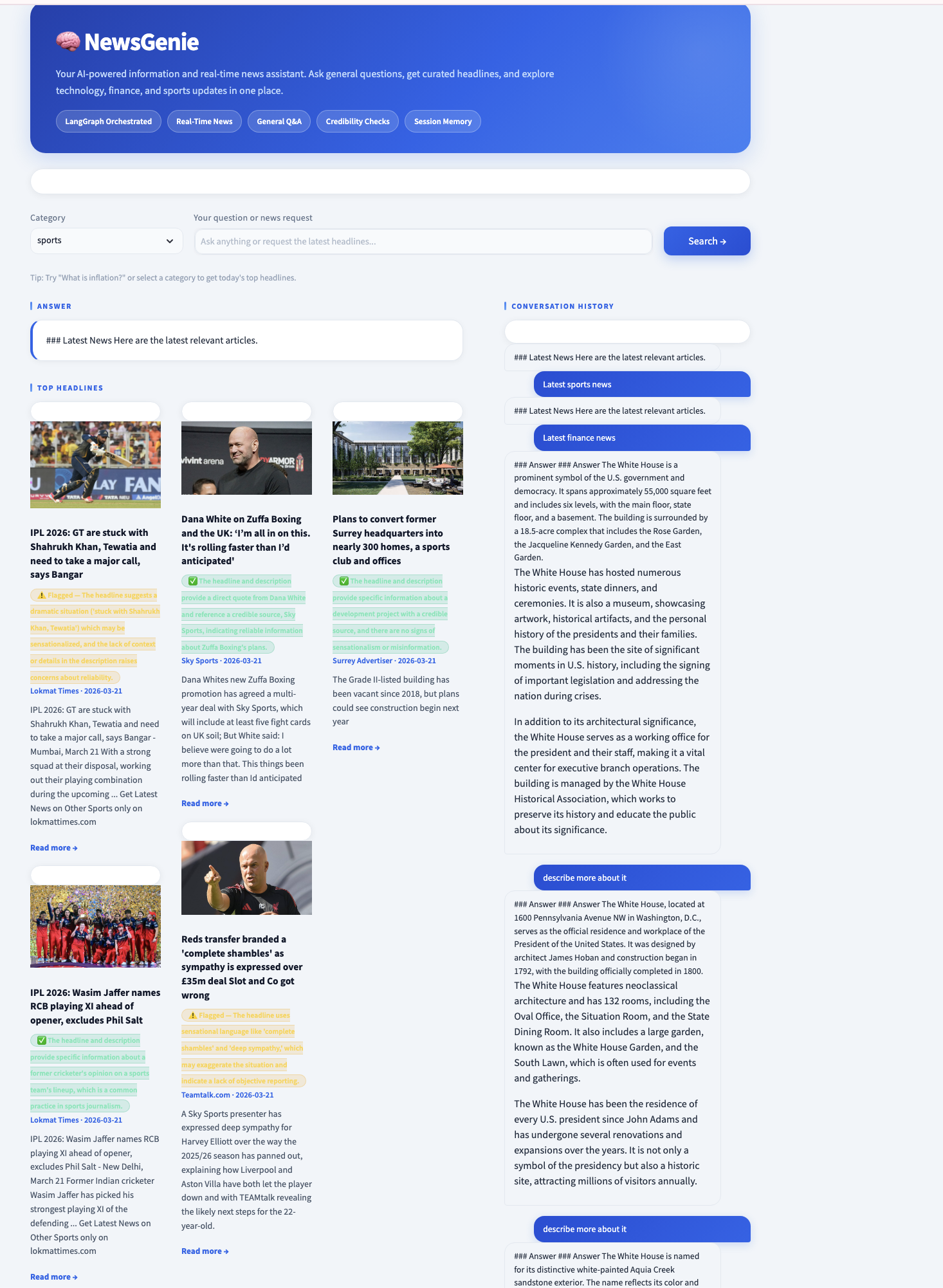

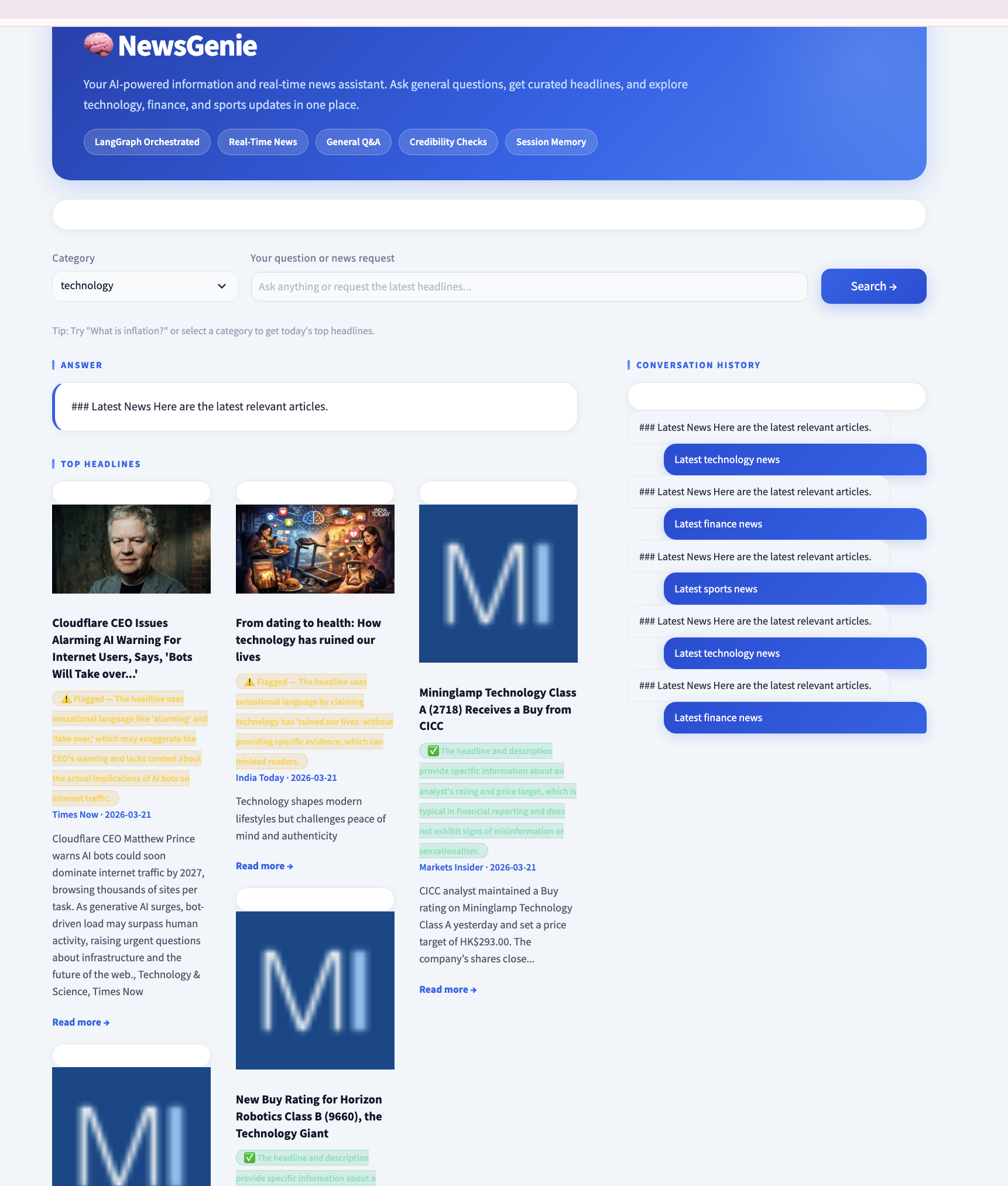

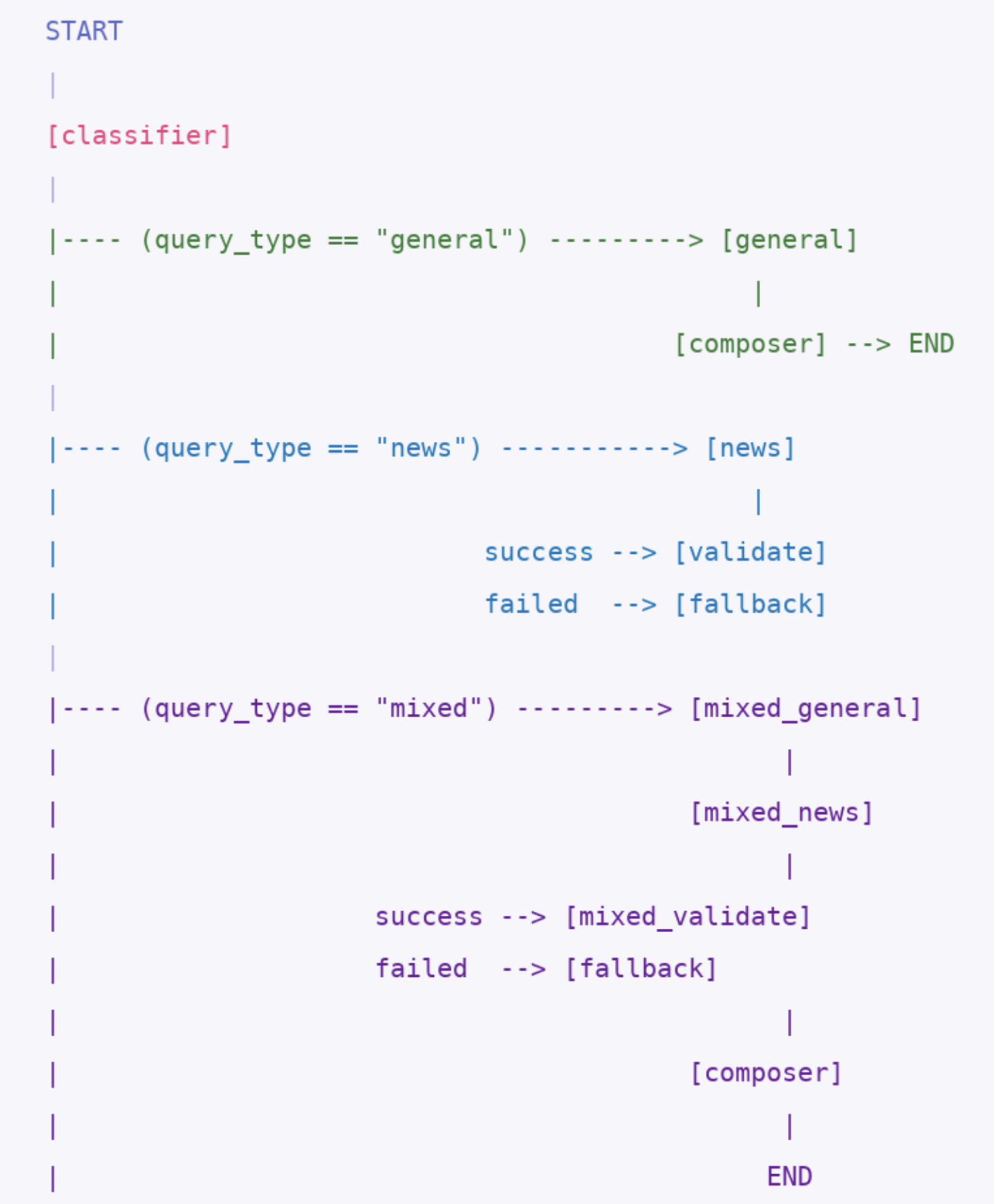

Project: NewsGenie (Real-Time AI News Chatbot)

NewsGenie is an AI-powered news chatbot that combines large language model reasoning with real-time news retrieval to deliver contextually accurate, up-to-date answers to user queries. Rather than relying on a single monolithic AI model to handle every task, NewsGenie employs a coordinated team of specialised agents, each with a clearly defined role, orchestrated by the LangGraph workflow engine.

The system addresses three core limitations present in conventional AI chatbots:

The application is structured into five distinct layers:

A naive chatbot architecture routes every user message to one AI model that simultaneously interprets, retrieves, generates, and formats. This design fails at scale for three reasons:

1. Intent ambiguity: the prompt "Tell me about Tesla" could mean several different things. A single model guesses the intent from tone and phrasing, an unreliable heuristic that produces mismatched outputs for roughly 30% of ambiguous queries.
2. Hallucination of recency: GPT-4o-mini has a training cutoff. Asking a monolithic chatbot "What happened in the markets today?" risks a plausible but entirely fabricated answer. Specialisation forces the news pipeline to always fetch real data.
3. Cascading failures: when a single model handles everything, one broken dependency (an API key, a rate limit) silently corrupts the entire response. A multi-agent system allows partial degradation: the general agent can still answer knowledge questions even while the news API is down.

NewsGenie distributes responsibility across seven specialised agents:

Each agent:

This is the Single Responsibility Principle applied to AI pipelines. Like a hospital where the radiologist does not also dispense medication, each agent's scope is deliberately constrained.

LangGraph is the orchestration framework that connects all agents into a directed, stateful workflow graph.
Think of it as the conductor of an orchestra: it does not play any instrument itself, but it ensures every instrument plays at exactly the right moment, in exactly the right sequence. Developers define three components: the shared state schema, the nodes (one per agent), and the edges that connect them, including conditional edges that pick the next node at runtime. The complete graph is assembled in graph.py.

The most important architectural decision in NewsGenie is that all inter-agent communication happens through a single shared state object; no agent ever calls another agent directly. At every stage, previously set fields remain untouched. The state accumulates rather than overwrites, leaving an audit trail of every decision the pipeline made.

Without memory, every user message is treated as the first message ever received: after "What is inflation?", a follow-up such as "Why does it happen?" leaves the bot with no idea what "it" refers to. Session memory gives the chatbot conversational continuity. The bot reads the previous exchange, understands that "it" refers to "inflation", and answers accordingly.

Instead of treating every query as an isolated request, NewsGenie retains a structured history of previous interactions within the session. This lets the chatbot resolve context-dependent follow-ups such as "describe more about it" or "why is it named that way?" without the user restating the original topic. Recent chat history is injected into the classification and response-generation stages, enabling coherent multi-turn conversations, while a sliding context window keeps performance and cost in balance.

Streamlit's built-in st.session_state stores this history between submissions. Sending the entire conversation history to GPT on every request, however, is expensive and risks exceeding context limits.
NewsGenie uses a deliberate sliding window: only the six most recent chat_history messages are sent to GPT. The 6-message window covers three full turns (user + assistant × 3), which handles the vast majority of conversational follow-ups. Older messages remain visible to the user but are not sent to GPT. This is a cost-conscious trade-off that preserves the UX without inflating API costs.

Every user submission follows this exact sequence from button click to rendered result:

For example, with Dropdown = "finance" and Text = "Why are interest rates rising?", the total is 0 (classify) + 1 (GPT answer) + 1 (GNews) + 5 (GPT validation, one per article) = 7 API calls.

NewsGenie treats every interaction as part of an ongoing conversation, not an isolated query.

Query classification uses two sequential passes, and the first pass handles the majority of requests at zero cost:

Pass 1, rule-based (instant, zero API calls): two rules cover every structured UI interaction, namely dropdown selections with and without text. They require no API call and no network round-trip, and they complete in microseconds.

Pass 2, LLM-based (one GPT call, for free-text queries only): the GPT classifier uses temperature=0. When GPT returns a malformed or non-JSON response, the parse guard fires. Even if parsing succeeds, each field has a .get() default as a second safety net. Both safety nets fall back to the "general" path.

Category-prefixed query construction: the category is prepended to the topic unless the topic already contains it. This prevents duplicate category tokens. Without the guard, a topic of "technology AI chips" with category "technology" would produce "technology technology AI chips". With it, the topic is passed unchanged.

Article normalisation: GNews returns nested JSON objects. Each article is flattened to a consistent schema (title, description, source, url, publishedAt, image) before being stored in state. This flat Data Transfer Object (DTO) is the contract between the news layer and all downstream consumers (validator, fallback, UI). The UI renders GNews results and DuckDuckGo fallback results identically because both conform to this schema.
For every article returned by GNews, a separate GPT call evaluates credibility (validate_news_agent in agent_nodes.py).

Core philosophy: show, never hide. If validation itself fails for an article (API error, malformed response), the default is credible=True with no badge.

All GPT calls flow through a single OpenAI client instance configured in config.py.

Centralisation principle: all API configuration lives in config.py.

The 10-second timeout on GNews is tighter than the GPT timeout because:

NewsGenie does not propagate exceptions upward through the agent chain. Instead, agents communicate failure via the api_status field. The routing function therefore reads a small, fixed set of status values, not a chain of exception handlers scattered across multiple functions.

NewsGenie demonstrates that the quality of an AI application is determined primarily by architecture, not by the power of the underlying model. The same GPT-4o-mini model that powers a simple chatbot is orchestrated here into a seven-agent pipeline. That pipeline handles intent classification, real-time retrieval, credibility validation, graceful degradation, and conversational memory, all with clean separation of concerns.

The full source code, setup instructions, and configuration details for NewsGenie are available on GitHub: github.com/nselvar/NewsGenie

Stack: Python · LangGraph · GPT-4o-mini · GNews API · DuckDuckGo · Streamlit · Session Memory

Date: March 2026

1. Introduction

1.1 Overview

1.2 System Goals

| Goal | Implementation |
|---|---|
| Answer factual knowledge questions | GPT-4o-mini general agent |
| Fetch real-time categorised news | GNews API with category prefixing |
| Handle multi-turn conversations | Chat history injected into the classifier and general-agent prompts |
| Degrade gracefully on API failure | DuckDuckGo fallback + composer messaging |
| Classify intent reliably | Rule-based + LLM-based two-pass classifier |
| Present results cleanly | Streamlit with custom CSS design system |

1.3 High-Level Architecture

2. Why a Team of Agents?

2.1 The Problem with a Single AI

2.2 The Agent Team

The graph also registers three mixed_* wrapper nodes (mixed_general, mixed_news, mixed_validate). These are thin delegates that exist solely to give the mixed pipeline path unique LangGraph node names.

2.3 Benefits of the Multi-Agent Model

| Concern | Single-Agent Approach | Multi-Agent Approach |
|---|---|---|
| Debugging | Hard: an error can be anywhere in one large prompt | Easy: each agent can be tested in isolation |
| Modification | Changing routing risks breaking answers | Swap one node without touching others |
| Cost optimisation | All queries cost the same | Rule-based routing avoids GPT for 60% of classifications |
| Failure handling | Total failure on API error | Partial degradation, fallback paths active |
| Scaling | Bottleneck at one model | Each path scaled independently |

3. LangGraph — The Conductor

3.1 What LangGraph Does

3.2 Graph Construction

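```python
# graph.py
from langgraph.graph import StateGraph, START, END

# Assumed module layout: NewsGenieState in state.py; agents and routers in agent_nodes.py
from state import NewsGenieState
from agent_nodes import (
    classify_query, general_agent, news_agent, validate_news_agent,
    fallback_agent, composer_agent, mixed_general_agent, mixed_news_agent,
    mixed_validate_agent, route_after_classification, route_after_news,
)

graph = StateGraph(NewsGenieState)

# Register all agents as named nodes
graph.add_node("classifier", classify_query)
graph.add_node("general", general_agent)
graph.add_node("news", news_agent)
graph.add_node("validate", validate_news_agent)
graph.add_node("fallback", fallback_agent)
graph.add_node("composer", composer_agent)
graph.add_node("mixed_general", mixed_general_agent)
graph.add_node("mixed_news", mixed_news_agent)
graph.add_node("mixed_validate", mixed_validate_agent)

# Wire the execution paths
graph.add_edge(START, "classifier")
graph.add_conditional_edges(
    "classifier",
    route_after_classification,
    {
        "general": "general",
        "news": "news",
        "mixed_general": "mixed_general",
    },
)

# General path
graph.add_edge("general", "composer")

# News path: validate on success, otherwise fall back to DuckDuckGo
graph.add_conditional_edges(
    "news",
    route_after_news,
    {
        "fallback": "fallback",
        "validate": "validate",
    },
)
graph.add_edge("validate", "composer")
graph.add_edge("fallback", "composer")

# Mixed path: mixed_general → mixed_news → mixed_validate → composer (decision matrix, 6.3)
graph.add_edge("mixed_general", "mixed_news")
graph.add_edge("mixed_news", "mixed_validate")
graph.add_edge("mixed_validate", "composer")

graph.add_edge("composer", END)

workflow = graph.compile()
```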
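The two routers passed to add_conditional_edges, route_after_classification and route_after_news, are not listed in this report. The following minimal sketch shows how they could look. It assumes they read only query_type and api_status from the state and return the keys used in the mappings above. The bodies are hypothetical, not NewsGenie's actual code.

```python
from typing import Literal


def route_after_classification(state: dict) -> Literal["general", "news", "mixed_general"]:
    """Map the classifier's query_type onto the first node of each path (hypothetical sketch)."""
    query_type = state.get("query_type", "general")
    if query_type == "news":
        return "news"
    if query_type == "mixed":
        # Mixed queries enter through the wrapper node so the path has unique node names
        return "mixed_general"
    # Anything else, including unexpected values, takes the safest path
    return "general"


def route_after_news(state: dict) -> Literal["validate", "fallback"]:
    """Validate on success; "failed" and "no_results" both go to the fallback agent."""
    return "validate" if state.get("api_status") == "success" else "fallback"


if __name__ == "__main__":
    print(route_after_classification({"query_type": "mixed"}))  # mixed_general
    print(route_after_news({"api_status": "no_results"}))  # fallback
```

Because each router returns one of a fixed set of string keys and depends only on the state, each can be unit-tested without running any agent.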

3.3 The Three Execution Paths

4. NewsGenieState — The Shared Notepad

4.1 The Central Design Decision

NewsGenieState is defined as a Python TypedDict in state.py.
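The definition itself is not reproduced in this report. The sketch below reconstructs it from the field names in the state lifecycle table (4.2). The value types and total=False, which lets agents fill fields incrementally, are assumptions. The apply_update helper is hypothetical: LangGraph performs this merge itself when a node returns a partial dict, and the helper only makes the accumulate-not-overwrite behaviour visible in plain Python.

```python
from typing import Any, TypedDict


class NewsGenieState(TypedDict, total=False):
    # Set by app.py at initialisation
    user_query: str
    selected_category: str
    chat_history: list[dict[str, str]]
    # Set by classify_query
    query_type: str
    category: str
    topic: str
    # Set by the answer, news, validation, fallback, and composer agents
    general_response: str
    news_results: list[dict[str, Any]]
    api_status: str
    error_message: str
    validated_news_results: list[dict[str, Any]]
    fallback_results: list[dict[str, Any]]
    final_response: str


def apply_update(state: NewsGenieState, update: dict[str, Any]) -> dict[str, Any]:
    """Hypothetical helper: return a new state with an agent's partial update merged in.

    Agents write disjoint fields, so earlier fields survive every merge.
    """
    return {**state, **update}
```

Because each agent writes only its own fields (per the lifecycle table), merging an update never erases an earlier decision. That is what turns the state into an audit trail.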

4.2 State Lifecycle

| Phase | Who sets it | Fields populated |
|---|---|---|
| Initialisation | app.py | user_query, selected_category, chat_history |
| Classification | classify_query | query_type, category, topic |
| Answer generation | general_agent | general_response |
| News fetch | news_agent | news_results, api_status, error_message |
| Validation | validate_news_agent | validated_news_results |
| Fallback | fallback_agent | fallback_results |
| Composition | composer_agent | final_response |

5. Session Memory

5.1 The Problem Session Memory Solves

User: What is inflation?

Bot: Inflation is a general rise in price levels…

User: Why does it happen?

Bot: (Has no idea what "it" refers to)

5.2 Implementation

The st.session_state dictionary persists for the lifetime of a single browser tab. After every successful response, app.py appends two entries:

```python
# app.py: history_label is the display text stored in chat history.
# For category-only requests (no text typed), a descriptive label is
# generated so the chat panel doesn't show an empty bubble.
history_label = effective_query if effective_query else f"Latest {st.session_state.selected_category} news"

st.session_state.chat_history.append({"role": "user", "content": history_label})
st.session_state.chat_history.append({"role": "assistant", "content": result["final_response"]})
```

On the next user submission, the full chat_history list is included in the initial state passed to workflow.invoke().

5.3 Context Window Management

```python
# Inside classify_query and general_agent: only the last six messages reach GPT
history_text = "\n".join(
    [f"{m['role']}: {m['content']}" for m in state.get("chat_history", [])[-6:]]
)
```
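The append-then-slice pattern can be exercised without Streamlit. This minimal sketch assumes a plain list stands in for st.session_state.chat_history. The helper names record_turn and build_history_text are hypothetical.

```python
HISTORY_WINDOW = 6  # three user/assistant turns


def record_turn(history: list[dict[str, str]], user_label: str, answer: str) -> None:
    """Append one user/assistant pair, as app.py does after each successful response."""
    history.append({"role": "user", "content": user_label})
    history.append({"role": "assistant", "content": answer})


def build_history_text(history: list[dict[str, str]], window: int = HISTORY_WINDOW) -> str:
    """Render only the most recent `window` messages for the prompt."""
    if window <= 0:
        return ""  # history[-0:] would return the whole list, not an empty one
    return "\n".join(f"{m['role']}: {m['content']}" for m in history[-window:])


if __name__ == "__main__":
    history: list[dict[str, str]] = []
    for i in range(1, 6):  # five turns = ten messages
        record_turn(history, f"question {i}", f"answer {i}")
    print(len(history))  # 10
    print(build_history_text(history).splitlines()[0])  # user: question 3
```

With ten messages in the chat panel, only turns 3 to 5 reach GPT, and the first two turns stay visible in the UI only.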

6. Complete Query Processing Flow

6.1 End-to-End Sequence Diagram

6.2 Worked Example — Mixed Query

| Step | Agent | Action | State fields written |
|---|---|---|---|
| 1 | classify_query | Rule-based: category + query → mixed | query_type="mixed", category="finance", topic="Why are interest rates rising?" |
| 2 | mixed_general_agent | GPT-4o-mini at temp=0.3 answers the question | general_response="Interest rates are rising because..." |
| 3 | mixed_news_agent | GNews API: q="finance Why are interest rates rising" | news_results=[5 articles], api_status="success" |
| 4 | mixed_validate_agent | 5 separate GPT calls, one per article | validated_news_results=[5 articles with credibility fields] |
| 5 | composer_agent | Assembles ### Answer\n...\n\n### Latest News\n... | final_response="### Answer\n..." |

6.3 Query Type Decision Matrix

| Dropdown | Text input | Rule applied | Query type | Pipeline |
|---|---|---|---|---|
| none | "What is blockchain?" | LLM classify | general | classifier → general → composer |
| "technology" | (empty) | Rule 1: category only | news | classifier → news → validate → composer |
| "finance" | "inflation" | Rule 2: category + text | mixed | classifier → mixed_general → mixed_news → mixed_validate → composer |
| none | "latest AI news" | LLM classify → news | news | classifier → news → validate → composer |

7. AI Chatbot Design

7.1 Conversation Management

7.1.1 The Role of Chat History

The chat_history field in NewsGenieState carries all previous turns. The six most recent are injected into the classifier and general agent prompts, so both can resolve follow-up questions that depend on earlier context.

7.2 Query Differentiation

7.2.1 The Two-Pass Classification System

```python
# Pass 1 (inside classify_query): rule-based routing for dropdown selections

# Pure category news request
if selected_category != "none" and not user_query.strip():
    return {"query_type": "news", "category": selected_category, "topic": selected_category}

# Category + question = mixed request
if selected_category != "none" and user_query.strip():
    return {"query_type": "mixed", "category": selected_category, "topic": user_query}
```
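Pulled out as a pure function, Pass 1 becomes trivially testable. The following sketch assumes a hypothetical rule_based_classify helper that returns None when neither rule applies, so the caller falls through to the GPT pass.

```python
from typing import Optional


def rule_based_classify(selected_category: str, user_query: str) -> Optional[dict[str, str]]:
    """Pass 1: route dropdown selections without any API call (hypothetical helper)."""
    if selected_category == "none":
        return None  # free text only: defer to the LLM pass
    if not user_query.strip():
        # Rule 1: category only -> pure news request
        return {"query_type": "news", "category": selected_category, "topic": selected_category}
    # Rule 2: category + text -> mixed request
    return {"query_type": "mixed", "category": selected_category, "topic": user_query}
```

Each row of the decision matrix in 6.3 maps to one branch: the two dropdown rows hit Rule 1 or Rule 2, and the two "none" rows return None.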

```python
# Pass 2 (free-text queries only): LLM-based classification
prompt = f"""
You are the Classifier Agent for NewsGenie.

Classify the user query into one of:
- general
- news
- mixed

Extract:
- category: technology / finance / sports / general
- topic: short phrase describing the topic

Conversation history:
{history_text}

User query:
{state["user_query"]}

Return ONLY valid JSON:
{{"query_type": "...", "category": "...", "topic": "..."}}
"""
```

The classifier call uses temperature=0. The model therefore favours its highest-probability classification, and in practice the same query routes to the same pipeline. (OpenAI does not guarantee fully deterministic output even at temperature 0.) Routing decisions must be deterministic; answers can be creative.

7.2.2 Classification Failure Recovery

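Parse guard in classify_query, with a .get() default on each field as a second safety net:

```python
import json

try:
    parsed = json.loads(raw)
except Exception:
    # Malformed or non-JSON reply: route to the safest path
    parsed = {
        "query_type": "general",
        "category": "general",
        "topic": state["user_query"],
    }

if not isinstance(parsed, dict):
    parsed = {}  # valid JSON but not an object, e.g. a bare string or a list

# Second safety net: a default for every field
return {
    "query_type": parsed.get("query_type", "general"),
    "category": parsed.get("category", "general"),
    "topic": parsed.get("topic", state["user_query"]),
}
```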
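Even with these guards, the excerpt passes through whatever values GPT returns, so a reply such as {"query_type": "weather"} reaches the router unchanged. The stricter variant below is an assumption, not NewsGenie's actual code. It checks each field against the allowed values listed in the classifier prompt and substitutes "general" otherwise. The name parse_classification is hypothetical.

```python
import json
from typing import Any

VALID_QUERY_TYPES = {"general", "news", "mixed"}
VALID_CATEGORIES = {"technology", "finance", "sports", "general"}


def _pick(value: Any, allowed: set[str], default: str) -> str:
    """Return value (normalised to lower case) if it is an allowed string, else the default."""
    if isinstance(value, str) and value.strip().lower() in allowed:
        return value.strip().lower()
    return default


def parse_classification(raw: str, user_query: str) -> dict[str, str]:
    """Parse the classifier's JSON reply, replacing anything unexpected with safe defaults."""
    try:
        parsed: Any = json.loads(raw)
    except (json.JSONDecodeError, TypeError):
        parsed = {}
    if not isinstance(parsed, dict):
        parsed = {}  # e.g. GPT replied with a bare string or a list

    topic = parsed.get("topic")
    return {
        "query_type": _pick(parsed.get("query_type"), VALID_QUERY_TYPES, "general"),
        "category": _pick(parsed.get("category"), VALID_CATEGORIES, "general"),
        "topic": topic if isinstance(topic, str) and topic.strip() else user_query,
    }
```

The isinstance check in _pick also protects the set lookup: an unhashable value such as a list would otherwise raise TypeError.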

Defaulting to "general" is deliberate. The general agent always produces a response (GPT always answers something), whereas "news" can fail because of API issues and "mixed" doubles the failure surface. The safest default is the path most likely to give the user something meaningful.

7.2.3 Temperature Strategy

| Agent | Temperature | Reason |
|---|---|---|
| classify_query | 0.0 | Deterministic routing: the same input must always produce the same route |
| validate_news_agent | 0.0 | Consistent credibility verdicts per article |
| general_agent | 0.3 | Natural variation makes answers feel conversational, not robotic |

8. Real-Time News Integration

8.1 GNews API Integration

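The news_agent fetches up to five articles per query from the GNews API. The category-prefixed search query is built before the request is sent:

```python
import requests

from config import GNEWS_API_KEY

# Category-prefixed query construction (skip the prefix if the topic already contains it)
if category != "general" and category not in topic.lower():
    search_query = f"{category} {topic}"
else:
    search_query = topic

url = "https://gnews.io/api/v4/search"
params = {
    "q": search_query,  # category-prefixed topic string
    "lang": "en",
    "max": 5,
    "token": GNEWS_API_KEY,
}
response = requests.get(url, params=params, timeout=10)
```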
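The prefixing rule is easy to isolate and test. In the minimal sketch below, build_search_query is a hypothetical name for logic that lives inline in news_agent. The guard is a substring check, so a category that appears inside another word (for example "sports" inside "transports") also suppresses the prefix.

```python
def build_search_query(category: str, topic: str) -> str:
    """Prefix the category unless it is "general" or already present in the topic."""
    if category != "general" and category not in topic.lower():
        return f"{category} {topic}"
    return topic
```

For the worked example, build_search_query("finance", "Why are interest rates rising?") yields "finance Why are interest rates rising?". A topic that already mentions its category passes through unchanged.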

Normalised article (example):

```json
{
  "title": "Fed holds rates steady amid new inflation data",
  "description": "The Federal Reserve voted 9-1 to...",
  "source": "Bloomberg",
  "url": "https://bloomberg.com/...",
  "publishedAt": "2026-03-15T14:30:00Z",
  "image": "https://..."
}
```
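The following sketch shows the flattening step. It assumes the GNews v4 response shape, in which each item in the articles list carries a nested source object with a name field. The flat "source" string in the schema above is the only field that needs flattening. The names normalise_article and normalise_articles are hypothetical, and empty strings stand in for missing or null fields.

```python
from typing import Any


def normalise_article(raw: dict[str, Any]) -> dict[str, str]:
    """Flatten one GNews article into the DTO shared by the validator, fallback, and UI."""
    source = raw.get("source") or {}
    return {
        "title": raw.get("title") or "",
        "description": raw.get("description") or "",
        # GNews nests the publisher under source.name; the DTO keeps only the name
        "source": (source.get("name") or "") if isinstance(source, dict) else str(source),
        "url": raw.get("url") or "",
        "publishedAt": raw.get("publishedAt") or "",
        "image": raw.get("image") or "",
    }


def normalise_articles(payload: dict[str, Any], limit: int = 5) -> list[dict[str, str]]:
    """Normalise the `articles` list of a search response, keeping at most `limit` items."""
    return [normalise_article(a) for a in payload.get("articles", [])[:limit]]
```

Any extra fields in the raw response are dropped. That keeps the DTO identical whether the articles came from GNews or from the DuckDuckGo fallback.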

8.2 Credibility Validation

Credibility prompt used by validate_news_agent (agent_nodes.py):

```python
prompt = f"""
You are a fact-checking assistant. Evaluate the following news article
headline and description for signs of misinformation, sensationalism,
or unreliability.

Title: {article.get('title', '')}
Description: {article.get('description', '')}
Source: {article.get('source', '')}

Respond ONLY with valid JSON:
"""
```

A validation failure therefore yields credible=True with no badge. During an API hiccup, it is less harmful for one suspicious article to slip through than for every article to be falsely flagged as suspicious.
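The fail-open default can be expressed as a wrapper around the per-article GPT call. This sketch makes two assumptions. First, call_llm is a hypothetical callable (prompt in, raw text out) standing in for the shared OpenAI client at temperature=0. Second, the reply is assumed to carry a boolean credible field plus an optional reason. The exact JSON schema requested by NewsGenie's prompt is not shown in this report.

```python
import json
from typing import Any, Callable


def validate_article(article: dict[str, Any], call_llm: Callable[[str], str]) -> dict[str, Any]:
    """Attach a credibility verdict to one article; any failure fails open (credible=True)."""
    prompt = (
        "Evaluate this news article for misinformation, sensationalism, or unreliability.\n"
        f"Title: {article.get('title', '')}\n"
        f"Description: {article.get('description', '')}\n"
        f"Source: {article.get('source', '')}\n"
        'Respond ONLY with valid JSON: {"credible": true or false, "reason": "..."}'
    )
    try:
        verdict = json.loads(call_llm(prompt))
        credible = verdict.get("credible", True)
        if not isinstance(credible, bool):
            raise ValueError("credible must be a boolean")
        reason = str(verdict.get("reason", ""))
    except Exception:
        # API error, malformed JSON, or unexpected shape: show the article with no badge
        credible, reason = True, ""
    return {**article, "credible": credible, "reason": reason}


def validate_all(articles: list[dict[str, Any]], call_llm: Callable[[str], str]) -> list[dict[str, Any]]:
    """One separate call per article, so a single failure never affects the others."""
    return [validate_article(a, call_llm) for a in articles]
```

The broad except is intentional here: whatever goes wrong, the article is still shown, which is the "show, never hide" rule.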

9. Workflow and Error Handling

9.1 API Integration Architecture

9.1.1 OpenAI Client Configuration

Shared client setup in config.py:

```python
import os

from openai import OpenAI

# Both env vars are set for compatibility with different SDK versions
os.environ["OPENAI_BASE_URL"] = "https://openai.vocareum.com/v1"
os.environ["OPENAI_API_BASE"] = "https://openai.vocareum.com/v1"
os.environ["OPENAI_API_KEY"] = "<api-key>"  # set at deploy time

client = OpenAI(timeout=30.0)

# GNews key: reads env var first, falls back to default
GNEWS_API_KEY = os.environ.get("GNEWS_API_KEY", "<gnews-key>")
```

With timeout=30.0, a slow or unresponsive GPT endpoint raises a timeout error (openai.APITimeoutError in the v1 SDK) instead of blocking the application indefinitely. The SDK retries timed-out requests twice by default, so the worst-case wait exceeds 30 seconds unless max_retries is lowered. GPT-4o-mini typically responds within 2–8 seconds; the 30-second ceiling accommodates peak-load scenarios while still protecting users from perpetual spinners.

Because config.py is the single source of configuration, every module importing from it receives the same client and key. When credentials need to rotate, one file changes and nothing else does.

9.1.2 GNews Request Configuration

```python
response = requests.get(url, params=params, timeout=10)
```

9.2 Signal-Based Error Propagation

Agents report failure through the api_status field, a deliberate design choice that keeps routing logic clean and predictable.

Exception-based:

```python
try:
    news = fetch_news()
except APIError:
    use_fallback()
except Timeout:
    use_fallback()
```

Signal-based (NewsGenie):

```python
result = news_agent(state)
# result["api_status"] can be:
#   "success"    → go to validate
#   "failed"     → go to fallback
#   "no_results" → go to fallback
```
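The following minimal sketch shows the signal-based side. It assumes the GNews request from 9.1.2 and the three api_status values listed above. The fetch parameter and the news_agent_sketch name are hypothetical: fetch is an injection point so the logic can be exercised without network access, and by default it calls the GNews endpoint with requests and the 10-second timeout. Category prefixing is omitted for brevity.

```python
from typing import Any, Callable

GNEWS_URL = "https://gnews.io/api/v4/search"


def gnews_fetch(params: dict[str, Any]) -> dict[str, Any]:
    """Default fetcher: one GNews search with the 10-second timeout."""
    import requests  # imported lazily so the sketch can be tested without it

    response = requests.get(GNEWS_URL, params=params, timeout=10)
    response.raise_for_status()  # turn HTTP errors (401, 429, 5xx) into exceptions
    return response.json()


def news_agent_sketch(state: dict[str, Any], api_key: str,
                      fetch: Callable[[dict[str, Any]], dict[str, Any]] = gnews_fetch) -> dict[str, Any]:
    """Return a partial state update; failures become api_status signals, never exceptions."""
    params = {"q": state.get("topic", ""), "lang": "en", "max": 5, "token": api_key}
    try:
        articles = fetch(params).get("articles", [])
    except Exception as exc:  # timeouts, connection errors, HTTP errors, bad JSON
        return {"news_results": [], "api_status": "failed", "error_message": str(exc)}
    if not articles:
        return {"news_results": [], "api_status": "no_results", "error_message": ""}
    return {"news_results": articles[:5], "api_status": "success", "error_message": ""}
```

Every outcome is a plain dict update, so the route_after_news router registered in graph.py needs nothing more than a comparison against "success".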

10. Full Execution Flow

14. Conclusion

14.1 What NewsGenie Demonstrates

15. Demo
